Stretch Robot Interface

My Role:

Interface designer (1 of 3); there was also 1 engineer on the team

Background

After the Turtlebots for Telemedicine project, we saw the need to improve the interaction between doctor and patient. Our lab had just received a Stretch robot, a “mobile manipulator robot” with an arm the operator can control. The arm lets the operator interact more with the robot’s environment, which can lead to a better experience. In addition to designing for physicians in the emergency department (ED), our team also designed for a second population: children who are homebound. Children who need to stay home due to illness receive a lower quality of education than other students, so we wanted to see if the Stretch could help these students attend school.

The Challenge

Designing a website-like interface for a robot that has an arm was a big challenge.

There were many degrees of freedom that my team and I had to account for:

The base of the robot could move forward and backwards, and could rotate left and right.

The head/camera of the robot could rotate almost 360 degrees, and look up and down.

The arm could move up and down the robot body, and could extend in and out.

The wrist could rotate 360 degrees in either direction.

The gripper could open and close.

That is a lot of degrees of freedom, so if the interface isn’t designed properly, the operator might be overwhelmed by all the different ways the robot can move!
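To make the count concrete, here is a minimal sketch that lists the motions described above and groups them by robot part, roughly how an interface might cluster its controls. This is only an illustration, not code from the project: the `Control` class, the `CONTROLS` list, and `group_by_part` are hypothetical names, and the motion labels are paraphrased from the list above.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Control:
    """One controllable motion (hypothetical structure, for illustration only)."""
    part: str
    motion: str


# The motions listed above, one entry per degree of freedom.
CONTROLS = [
    Control("base", "drive forward/backward"),
    Control("base", "rotate left/right"),
    Control("head", "pan"),
    Control("head", "tilt up/down"),
    Control("arm", "lift up/down"),
    Control("arm", "extend in/out"),
    Control("wrist", "rotate"),
    Control("gripper", "open/close"),
]


def group_by_part(controls):
    """Group motions by robot part, preserving the order they were listed in."""
    groups = defaultdict(list)
    for control in controls:
        groups[control.part].append(control.motion)
    return dict(groups)


if __name__ == "__main__":
    for part, motions in group_by_part(CONTROLS).items():
        print(f"{part}: {', '.join(motions)}")
```

Grouping by part gives five clusters covering eight separate motions, which is one way to see why showing every control at once could overwhelm an operator.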

The Process

This is the first year of a 4-5 year study, so my team and I laid the design groundwork for the rest of the study. We iterated on many designs and worked through several big questions along the way.

Finished Turtlebot interface. Initially, we were building our Stretch interface designs off of the interface design for the Turtlebot.

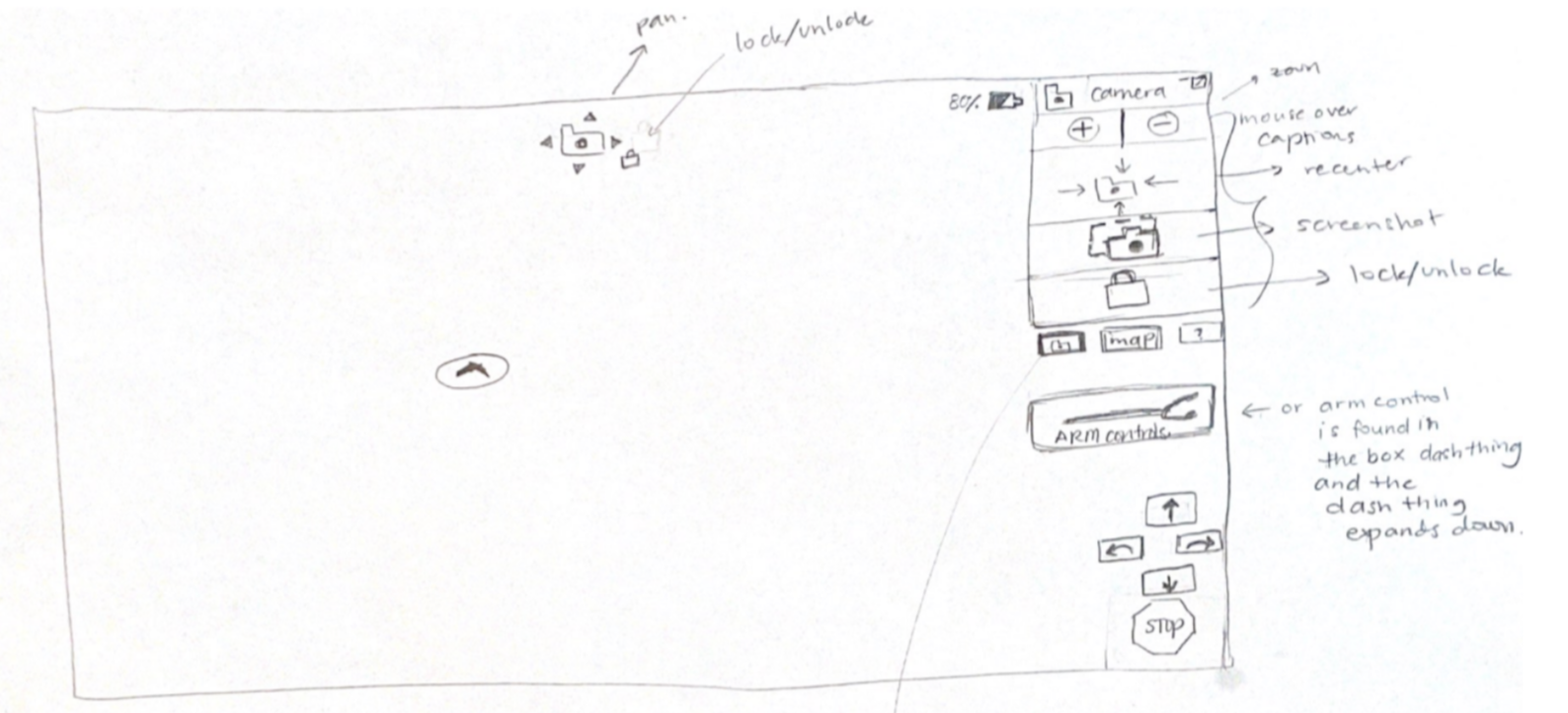

Initial sketches of the Stretch Interface:

An early iteration that explored the “click and go” method of shared control. This version relied more on automation, so the robot would be controlled similarly to how you control the screen in Google Earth’s interactive map.

This iteration played with different video feeds and putting a box on the robot base to store items.

The 2D robot control model.

We had 50+ other iterations exploring different ideas relating to shared control, what tools to display, and how to navigate controlling all the different elements of the robot.

My team and I explored different ways to show the operator how the robot looks in its environment when they aren’t in the room to see it. It is hard to know what position the head, arm, and other parts of the robot are in when you are in the driver’s seat and aren’t co-located with the robot.

One idea that came up was a 2D model of the robot that doubles as a way to control the robot’s different parts and as a way to see what the robot would look like if you were in the same room with it.

The Result

We created a higher-fidelity prototype of a design that has augmented reality (AR) elements. AR would help the operator feel more immersed in the environment where they are controlling the robot.

“Playground” Prototype

One version of the prototype was customized for children who are homebound. This interface is more child-friendly: the controls are bigger and more centralized, and there are more colors.

Simulation Center Prototype: navigation view

In the emergency department prototype, we worked through big questions such as “how do you know what direction you’re facing compared to the robot base’s direction?” I came up with this 2D visualization, similar to a compass, that replaced the robot model idea in the previous section. Located at the bottom right corner, this “compass” easily shows the operator when the camera is facing forward and when it is facing towards the arm.
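The logic behind a compass like this can be sketched in a few lines: compare the camera’s pan angle to the base’s forward direction and report when the camera points forward or toward the arm. This is a hedged illustration, not the prototype’s implementation. The function names, the `arm_bearing_deg` parameter (the arm’s direction relative to the base’s forward direction), and the 15-degree tolerance are all assumptions made for the example.

```python
def normalize_deg(angle):
    """Wrap an angle in degrees into the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def camera_label(head_pan_deg, arm_bearing_deg, tolerance_deg=15.0):
    """Describe where the camera points relative to the robot base.

    head_pan_deg:    camera pan, measured from the base's forward direction.
    arm_bearing_deg: direction of the arm relative to forward (assumed input).
    tolerance_deg:   how close counts as "facing" (assumed value).
    """
    pan = normalize_deg(head_pan_deg)
    if abs(pan) <= tolerance_deg:
        return "forward"
    if abs(normalize_deg(pan - arm_bearing_deg)) <= tolerance_deg:
        return "arm"
    return "other"


if __name__ == "__main__":
    # Hypothetical readings, assuming the arm sits at -90 degrees from forward.
    for pan in (5, -85, 275, 120):
        print(pan, camera_label(pan, arm_bearing_deg=-90))
```

Wrapping angles first matters because the head can rotate almost a full circle, so a pan of 275 degrees and a pan of -85 degrees describe the same direction.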

Simulation Center Prototype: arm view

We added an arm camera to help the operator pick up objects and better perceive the arm’s height.

Takeaways

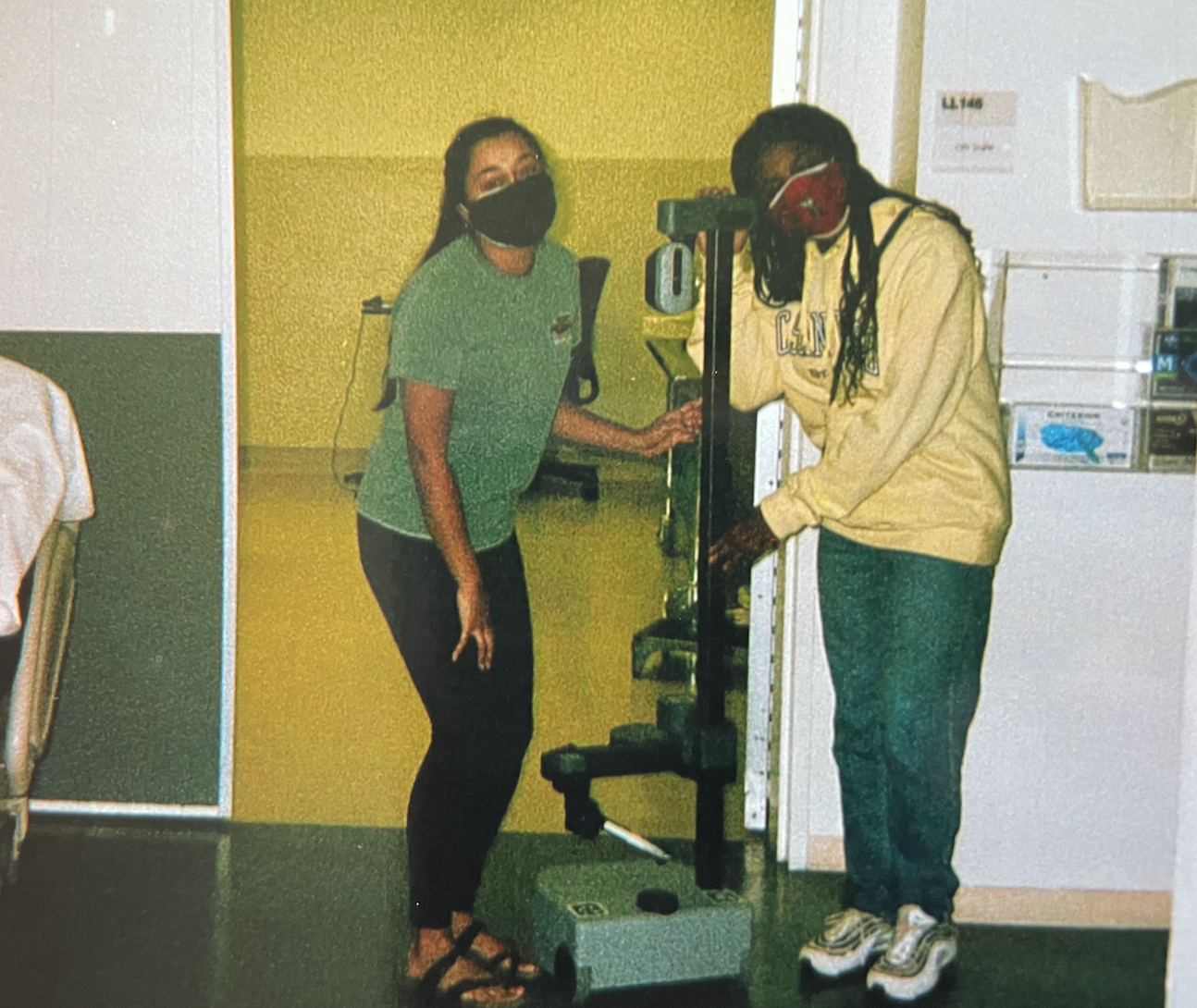

My lab-mate, the Stretch robot, and me at the UCSD Simulation Center

What I learned from this process is that it takes time to consider all the different factors, read what the literature says, and compile all of that into an interface. In industry, design is much more fast-paced; in the academic setting, we slowed down to design in uncharted territory. There are not many previous studies on the best design for a mobile manipulator, especially for the populations we chose to design for. I grew into a more patient designer, and I hope to take this patience with me into industry, thinking through big questions rather than rushing to the next deliverable.